April 6, 2026

Key takeaways:

- The Formative Writing Framework blends new technology and assessment literacy with proven teaching practices.

- Across Framework sites in 2025–26, students’ ELA proficiency increased by 10–20 percentage points, with the largest gains driven by improved writing.

- The Framework helps schools make better use of their existing tools and fill gaps with model lessons, AI-based scoring and planning, on-site coaching, and deeper data insights.

Recent headlines about student reading and writing proficiency in the United States are startling. Beyond the headlines, there’s a larger story at play involving technology and accountability. Although technology has changed the way we communicate, high-stakes assessments still ask students to demonstrate sustained, evidence-based writing rather than just answer questions about writing.

Susan Levenson is a school and district improvement facilitator and program manager at WestEd. Recognizing that this new landscape is uncharted territory for many schools and districts, Levenson led the development of WestEd’s Formative Writing Framework, a next-generation writing program. The main benefit of the Framework is that it draws on engaging performance tasks to generate actionable data that teachers can use to strengthen instruction and improve student outcomes.

In this Q&A, she discusses the challenges educators face today and how the Formative Writing Framework bridges classroom writing instruction and accountability. Read on for her insights into building students’ writing proficiency.

This interview has been lightly edited for clarity.

On the technology side, students are growing up in a very different literacy environment. They’re reading and writing constantly, but it’s fragmented, fast, and often informal. We see shorter attention spans, different grammar norms, and less sustained engagement with complex text. There’s also substantial cognitive research around handwriting versus keyboarding and how that affects processing and retention.

At the same time, accountability systems have brought writing back into focus, but very quickly. For a long time, especially in the No Child Left Behind era, writing was almost invisible in high-stakes assessment systems. When it was assessed, it was often through multiple-choice questions about writing, not actual writing.

Now writing performance tasks are back, and they’re heavily weighted. But schools haven’t always had time to build deep assessment literacy around writing. So, when we help schools disaggregate their writing data, they often see something shocking: very high rates of zero-score or nonscorable submissions.

What we do is help schools understand exactly how writing is driving overall literacy outcomes, and then we use modern technology to solve the feedback, practice, and data problems that technology helped create in the first place.

At its core, the Framework starts with a very simple idea: If we want better outcomes, everyone, both teachers and students, has to understand how writing is actually being assessed.

So, we start with assessment literacy and backward design. We look very carefully at how writing is assessed, what standards are being measured, and what the summative tasks actually look like both in quality adopted curricula and on high-stakes assessments. Then we build instruction from there.

What that looks like in practice is that writing assessments stop feeling mysterious. Teachers understand what the data is telling them. Students understand what strong academic writing actually looks like. And they get repeated experience with the types of writing they’ll see on important academic writing performance tasks. As you can imagine, this also has a positive impact on teachers’ ability to define, identify, and create higher-quality formative writing opportunities for their students throughout the year.

At the system level, we help schools build shared expectations, including shared rubrics, shared task structures, and shared language about writing. But we do that with teachers, not to teachers. They keep what works. We help fill the gaps and align everything.

The result is less guesswork and more confidence, and usually much more accurate proficiency outcomes.

The needs assessment is honestly one of my favorite parts of the work, because it’s where schools often have their biggest “aha” moments.

There are two big pieces. One is longitudinal writing data analysis. The other is a really practical review of what tools, materials, and practices are already in place.

What we usually find is that writing is much more heavily weighted in high-stakes English language arts (ELA) assessments than people realize. And when we disaggregate writing data, we often see surprisingly high rates of zero-score or nonscorable submissions.

Students get zeroes for very fixable reasons: for example, blank responses, writing too little, being off topic or off genre, copying too much text, or writing in the wrong language. But if a writing task is worth 20 percent or more of a final score, and 30 to 50 percent of students are getting zeroes, that can dominate a school’s entire ELA profile.
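To make that arithmetic concrete, here is a minimal sketch in Python. It assumes a simplified score in which writing contributes a fixed share of the total points; real state assessments typically use scale scores, so this illustrates the logic and is not any assessment's actual scoring model. The 20 percent weight comes from the interview, while the 70 percent proficiency cut and the function names are hypothetical, chosen only for illustration.

```python
def ceiling_without_writing(writing_weight: float) -> float:
    """Highest share of total points reachable with a zero on the writing task."""
    return 1.0 - writing_weight


def required_rate_on_rest(cut_score: float, writing_weight: float) -> float:
    """Share of the non-writing points needed to reach the cut with zero writing credit."""
    return cut_score / (1.0 - writing_weight)


writing_weight = 0.20  # from the interview: writing worth 20 percent of the final score
cut_score = 0.70       # hypothetical proficiency cut, for illustration only

ceiling = ceiling_without_writing(writing_weight)
needed = required_rate_on_rest(cut_score, writing_weight)

print(f"Ceiling with a zero on writing: {ceiling:.0%}")
print(f"Needed on everything else to reach the cut: {needed:.1%}")
```

Under these assumed numbers, a student with a nonscorable response can earn at most 80 percent of the points and would need about 87.5 percent of everything else to reach the cut. When a third to half of students start from that position, the effect on a school's overall results is large.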

The good news is that this is very solvable. When students learn exactly what these tasks require, practice realistic tasks repeatedly, and get targeted instruction on high-impact skills, improvement can happen quickly.

We’ve worked with schools that saw double-digit ELA proficiency gains simply by eliminating nonscorable submissions. For schools that have struggled for years, this can be transformative.

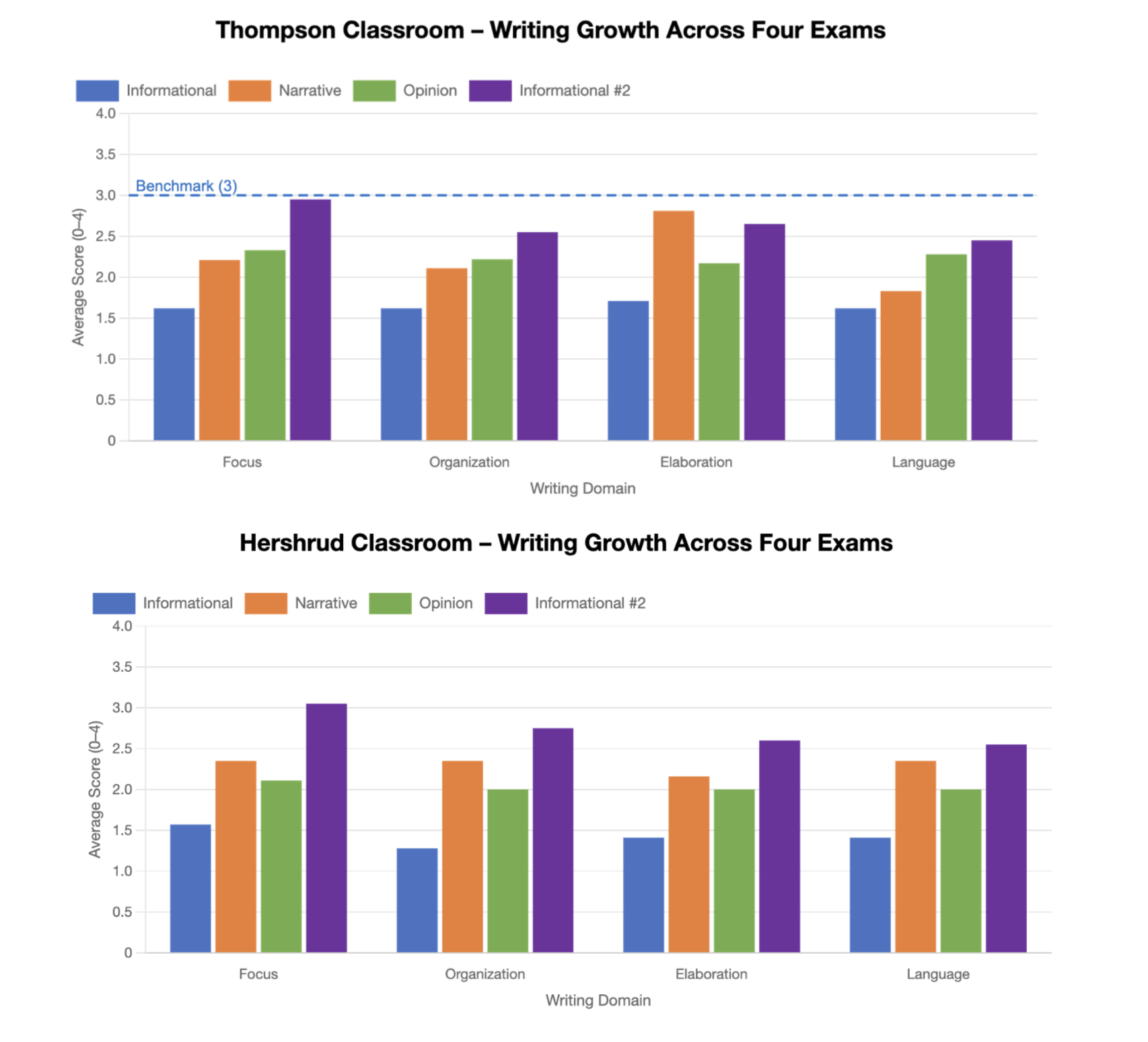

The chart above shows student writing growth across multiple assessments, with domain-level data used to guide instruction and support improved end-of-year outcomes.

For us, rubrics and performance tasks are really the backbone of consistent writing instruction.

Rubrics give students and teachers a stable definition of quality. When students see the same expectations year after year around focus, organization, elaboration, and language, writing stops feeling subjective or mysterious. It becomes something they can learn and control.

Performance tasks are where students build real academic writing stamina. These tasks require students to read, think, gather evidence, and respond in structured ways. When students have never seen that format before, it can be overwhelming. But when they practice it all year, they build confidence and fluency.

Students learn how to analyze prompts, use evidence, manage time, and make decisions about how to read source material. And because we can now use reliable AI scoring tools to provide near-instant feedback, students can revise and improve while the learning experience is still fresh.

We also work very intentionally to integrate this iterative performance task assessment system into existing assessment calendars, so it doesn’t feel like “one more thing” for teachers. And we use AI tools to help generate tasks that are structurally aligned to real assessment blueprints.

Historically, one of the biggest barriers to writing instruction was time. Teachers were being asked to score hundreds of essays and do it consistently. That’s one reason writing data got a reputation for being too subjective.

What we try to do is preserve the best parts of collaborative scoring, in which teachers work together to calibrate expectations and talk about student work, while using AI to handle the high-volume scoring work.

AI tools now allow students to get very fast, very detailed feedback. They also allow teachers to see growth trends automatically. And maybe most importantly, the tools give teachers time back, including time to conference with students and actually teach writing.

That said, we always emphasize that human calibration still matters. Teachers periodically score together and compare their work with AI scoring to keep everything aligned and trustworthy.
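One way such a comparison could be summarized is with exact and adjacent agreement rates, two measures commonly used in rater calibration. The sketch below is an illustration only, not the Framework's documented procedure: the 0 to 4 rubric scale, the score lists, and the function name are all hypothetical.

```python
def agreement_rates(teacher_scores, ai_scores):
    """Return (exact, adjacent) agreement between two lists of rubric scores.

    exact    = share of responses where both scores match
    adjacent = share of responses where the scores differ by at most one point
    """
    if not teacher_scores or len(teacher_scores) != len(ai_scores):
        raise ValueError("score lists must be non-empty and the same length")
    diffs = [abs(t - a) for t, a in zip(teacher_scores, ai_scores)]
    n = len(diffs)
    exact = sum(d == 0 for d in diffs) / n
    adjacent = sum(d <= 1 for d in diffs) / n
    return exact, adjacent


# Hypothetical scores for eight student responses on an assumed 0-4 rubric.
teacher = [3, 2, 4, 1, 3, 2, 0, 4]
ai = [3, 2, 3, 1, 4, 2, 2, 4]

exact, adjacent = agreement_rates(teacher, ai)
print(f"Exact agreement: {exact:.1%}")
print(f"Adjacent agreement: {adjacent:.1%}")
```

Responses where the two scores differ by more than one point, like the seventh response here, are natural candidates for a calibration conversation.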

What we often see is a cultural shift: Writing stops being something isolated in individual classrooms and becomes shared professional practice.

Once you build a system like this, implementation support is everything. You can’t hand people a framework and walk away.

Our coaches are site-specific, so they build real relationships with teachers. And they provide very practical support, facilitating professional learning communities around student work, helping to design tasks, helping to interpret data, supporting AI scoring implementation, modeling lessons, and providing one-on-one coaching.

Sustainable writing improvement requires embedded support over time—not just a training, but a partnership.

And one thing I always like to emphasize is that while people often associate this work with grades 3 and up, the alignment really begins in K–2. Even when students are drawing or dictating, we’re building the foundation for consistent, high-quality communication.

Ready to produce rapid, measurable achievement gains in student writing outcomes?

WestEd’s Formative Writing Framework is a dynamic writing instruction and assessment system that empowers educators to align resources and writing instruction with the cognitive demands of state or district literacy standards and assessments. Through engaging writing performance tasks that mirror the structures, formats, and genre balance found in high-stakes assessments, students are prepared for real-world academic challenges.