What Do We Value? Aligning Standards, Assessments, and Curricula

By Angela D. Bowzer and Mitchell E. Clarke

Angela D. Bowzer and Mitchell E. Clarke are both part of WestEd’s Assessment Research and Innovation team, with Bowzer serving as a Research Scientist and Clarke as a Research Assistant.

Values have been defined as “principles, fundamental convictions, ideals, standards or life stances which act as general guides to behavior or as points of reference in decision-making or the evaluation of beliefs or action” (Halstead, 1996, p. 3). Applying this definition in an assessment context, we can consider the framework through which decisions are made to signal what is valued in the assessment process. More specifically, we can consider the identification of components of an assessment system for evaluation as signaling what relationships within the system are valued most. What do the typical foci of alignment studies tell us about the value of the components of an assessment system?

Alignment of educational systems (learning standards, instruction, and assessments) is crucial to ensuring that the inferences made about what students know and can do based on evidence gathered through assessments are accurate (Cizek et al., 2018). With each reauthorization of the Elementary and Secondary Education Act (ESEA, 1965) come changes to the components of an educational system that must be shown to be aligned. From the earliest alignment studies through today, the policy contexts within which the alignment of educational systems is examined have been ever-changing. Federal requirements frequently shift, requiring a change in the alignment review process or in the component of the educational system that needs to be reviewed. State policies also shift, with some states requiring rigorous high school exit exams and others administering broad measures of college and career readiness (Ananda, 2003).

While assessments and standards have evolved over time, the most commonly used methods for assessing the alignment of assessments and standards to one another have been adapted only modestly to meet changing needs (Polikoff, 2020). Research does suggest that some efforts have been made to advance alignment methodologies: a handful of recent studies describe new methodologies that respond to the challenges of applying existing methods to new assessments, as well as innovative ways of conducting alignment studies. Additionally, new technology-enabled processes have made conducting alignment studies more efficient and more agile in addressing these changing needs.

Norm Webb pioneered the systematizing of alignment work by developing a method to assess the degree of alignment between assessments and standards (Webb, 1997, 1999). In the original methodology, Webb presented 12 criteria for evaluating alignment that addressed five general categories: content focus, articulation across grades and ages, equity and fairness, pedagogical implications, and systems applicability (Webb, 1997). The breadth of the categories of focus in this methodology signaled that more than subject matter was valued in the process of evaluating the relationship between the components of an assessment system.

Within a span of two years, Webb’s initial 12 criteria were reduced to four criteria that focus on the degree of alignment between test items and standards (Webb, 1999). While these four criteria are currently the most common foci of modern alignment studies (e.g., Webb & Smithson, 1999; Lombardi, 2006; Webb et al., 2006), they limit alignment evaluations to two components of a multi-faceted system. The reduction in the categories of focus in traditional alignment evaluations suggests that test items and standards are the components critical to an effective assessment system.

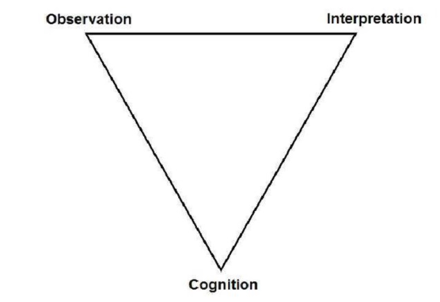

The assessment triangle shown in Figure 1 (National Research Council, 2001) provides a simple, yet powerful, image. The vertices of the triangle are labeled “cognition,” “observation,” and “interpretation,” and the sides connecting the vertices indicate associations among the three interconnected components of an assessment system. These associations suggest the importance of the relationships between what students know (cognition), how evidence of what students know is gathered (observation), and the meaning attached to results from the analyses of the evidence gathered (interpretation).

Figure 1. The Assessment Triangle

An alignment evaluation that focuses on the relationship between test items and standards is limiting, since “[a]lignment is about coherent connections across various aspects within and across a system and relates not simply to an assessment, but to the scores that assessment yields and their interpretations” (Forte, 2017, p. 3).

An alignment approach that extends to all sides of the triangle considers the degree to which the components of an assessment system (content standards, measurement targets, test specifications and blueprints, performance level descriptors, and test items) are in alignment and match the intentions defined by the assessment design and implementation procedures.

Results from an alignment approach that addresses the relationship between the interpretation of student performance and the expectations established during assessment design and development give states and organizations two things: information that can be used to refine existing processes and strengthen connections between the components of the assessment system, and validity evidence about the assessment system as a whole. Methodologies that expand the foci of traditional alignment studies in this way signal the importance of each component of an assessment system to its success; each component is valued for the contribution it brings.

One novel approach with great potential for expanding the foci of traditional alignment studies is the incorporation of technology such as natural language processing, machine learning, and newer artificial intelligence (AI) tools. These tools have already been used to make alignment processes more efficient through fuzzy matching of text and preliminary alignments between comparable criteria (e.g., matching a standard to a standard). In the future, technology offers remarkable promise not only for automating preliminary alignments but also for conceptual matching of criteria (e.g., aligning a test item to a depth of knowledge level). By leveraging these technologies, and technologies that have yet to be developed, the processes involved in an alignment evaluation can become even more efficient, and previously omitted components can be consistently included in the evaluation process, resulting in a more holistic alignment evaluation.
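To make the idea of fuzzy matching concrete, here is a minimal sketch of how a preliminary standard-to-standard alignment might be generated. It is an illustration only, not a description of any tool we or others actually use: the function names, the 0.6 threshold, and the sample standards are all hypothetical, and the similarity measure is simple character-level matching from Python's standard library rather than a trained language model.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Return a 0-1 surface-text similarity ratio, ignoring letter case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def preliminary_matches(source, target, threshold=0.6):
    """Pair each source standard with its closest target standard.

    Returns a list of (source_id, target_id, score) tuples. When the best
    score falls below the threshold, target_id is None so that a human
    reviewer can see the standard has no candidate match.
    """
    results = []
    for s_id, s_text in source.items():
        best_id, best_score = None, 0.0
        for t_id, t_text in target.items():
            score = similarity(s_text, t_text)
            if score > best_score:
                best_id, best_score = t_id, score
        if best_score < threshold:
            best_id = None
        results.append((s_id, best_id, round(best_score, 2)))
    return results


# Hypothetical standards, invented purely for illustration.
state_a = {
    "A.5.1": "Add and subtract fractions with unlike denominators.",
    "A.5.2": "Graph points on the coordinate plane.",
}
state_b = {
    "B.5.3": "Graph points in the first quadrant of the coordinate plane.",
    "B.5.7": "Add and subtract fractions that have unlike denominators.",
}

for row in preliminary_matches(state_a, state_b):
    print(row)
```

A match produced this way is only a starting point for reviewers. Surface similarity cannot judge cognitive demand, which is why conceptual matching remains a future promise rather than a solved problem.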

To this point, we have yet to discuss how curriculum fits into an alignment evaluation. How students learn the content of standards is often not considered when evaluating the design and implementation of an assessment system, especially in the context of federal peer review; it is one of the previously omitted components referenced above. Around the same time that Webb’s alignment method was gaining momentum, a method that considered instruction as a component of evaluation was developed. The Surveys of Enacted Curriculum (SEC; Polikoff et al., 2011; Porter, 2002) gather information about standards, assessments, and instruction (Martone & Sireci, 2009), considering the alignment between what is taught in the classroom, what is assessed, and the level of cognitive demand for relevant tasks (Roach et al., 2010). Since curricula are aligned to standards to varying degrees, and instruction impacts student opportunity to learn, exploring the relationship between curriculum, instruction, and assessments helps to contextualize student achievement (Vockley & Lang, 2009).

The instructional component of the SEC analysis is provided through teacher self-report data describing teaching practice and instructional materials used; therefore, accurate reporting by teachers is essential to the results of the evaluation (Porter, 2002). Each component of the analysis (curriculum, instruction, and standards) is rated by content and cognitive demand, allowing for comparisons of the intended curriculum, the enacted curriculum, and the assessed curriculum (Forte, 2017).
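One way to summarize such comparisons is the alignment index described in Porter (2002): each document is coded into a topic by cognitive demand matrix, the cells are converted to proportions, and the index is one minus half the sum of the absolute cell-by-cell differences. The sketch below is our own minimal illustration of that calculation, not SEC software; the function names and the small matrices are hypothetical.

```python
def to_proportions(matrix):
    """Scale a topic x cognitive-demand matrix so its cells sum to 1."""
    total = sum(sum(row) for row in matrix)
    if total == 0:
        raise ValueError("matrix contains no emphasis to normalize")
    return [[cell / total for cell in row] for row in matrix]


def alignment_index(a, b):
    """Compute 1 - (sum of |x - y|) / 2 over matching cells.

    The result ranges from 0 (no shared emphasis) to 1 (identical
    relative emphasis across all cells).
    """
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("matrices must have the same shape")
    pa, pb = to_proportions(a), to_proportions(b)
    total_diff = sum(
        abs(x - y)
        for row_a, row_b in zip(pa, pb)
        for x, y in zip(row_a, row_b)
    )
    return 1 - total_diff / 2


# Hypothetical coding counts: rows are topics, columns are
# cognitive-demand levels. These numbers are invented for illustration.
standards = [[4, 2, 0], [1, 3, 2]]
instruction = [[5, 1, 0], [2, 2, 2]]

print(alignment_index(standards, instruction))
```

Because the index depends only on relative emphasis, two documents of very different length can still be compared, but the result is only as trustworthy as the coding (or teacher self-report) behind each matrix.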

The results of the analyses can be displayed in graphs similar to heat maps, allowing for visualization of alignment strengths (see Porter, 2002). These maps can provide information about instructional emphases and opportunity to learn in the evaluation of student achievement (Cizek et al., 2018). This consideration of opportunity to learn provides additional context for descriptions of what students know and are able to do provided in assessment score reports, and brings the alignment picture full circle.

So, what do we value? If we want to provide opportunities to strengthen the relationships among all of the components of an educational system, then each component needs to be considered in the alignment evaluation process. This means using the knowledge and tools that we have to give states this opportunity: creating a cost-effective methodology for examining each relationship and recommending how to strengthen the connections in ways that benefit students. Because in the end, the students are who we value.

References

Ananda, S. (2003). Rethinking issues of alignment under No Child Left Behind. WestEd.

Cizek, G. J., Kosh, A. E., & Toutkoushian, E. K. (2018). Gathering and evaluating validity evidence: The generalized assessment alignment tool. Journal of Educational Measurement, 55(4), 477–512.

Forte, E. (2017). Evaluating alignment in large-scale standards-based assessment systems. Council of Chief State School Officers.

Halstead, J. M. (1996). Values and value education in schools. In J. M. Halstead & M. J. Taylor (Eds.), Values in education and education in values (pp. 3–14). Falmer Press.

Lombardi, T. J. (2006). An alignment study of the Minnesota Comprehensive Assessment-II with state standards in mathematics for grades 3-8 and 11. Minnesota Department of Education.

Martone, A., & Sireci, S. G. (2009). Evaluating alignment between curriculum, assessment, and instruction. Review of Educational Research, 79(4), 1332–1361. https://doi.org/10.3102/0034654309341375

National Research Council. (2001). Knowing what students know: The science and design of educational assessment. The National Academies Press.

Polikoff, M. S. (2020). The present and future of alignment. Educational Measurement: Issues and Practice, 39(2), 18–20.

Polikoff, M. S., Porter, A. C., & Smithson, J. (2011). How well aligned are state assessments of student achievement with state content standards? American Educational Research Journal, 48(4), 965–995. https://doi.org/10.3102/0002831211410684

Porter, A. C. (2002). Measuring the content of instruction: Uses in research and practice. Educational Researcher, 31(7), 3–14.

Roach, A. T., McGrath, D., Wixson, C., & Talapatra, D. (2010). Aligning an early childhood assessment to state kindergarten content standards: Application of a nationally recognized alignment framework. Educational Measurement: Issues and Practice, 29(1), 25–37.

Vockley, M., & Lang, V. (2009). Three approaches to aligning the National Assessment of Educational Progress with state assessments, other assessments, and standards. Council of Chief State School Officers.

Webb, N. L. (1997). Criteria for alignment of expectations and assessments in mathematics and science education (Research Monograph No. 6). Council of Chief State School Officers.

Webb, N. L. (1999). Alignment of science and mathematics standards and assessments in four states (Research Monograph No. 18). Council of Chief State School Officers.

Webb, N., Herman, J., & Webb, N. (2006). Alignment of mathematics state-level standards and assessments: The role of reviewer agreement (CSE Report 685). National Center for Research on Evaluation, Standards, and Student Testing (CRESST).

Webb, N. L., & Smithson, J. (1999). Alignment between standards and assessments in mathematics for grade 8 in one state [Paper presentation]. AERA, Montreal, Quebec, Canada.