Student Reactions to Culturally Responsive Mathematics Assessment Items

By Molly Faulkner-Bond and Priya Kannan, Senior Research Associates at WestEd. Faulkner-Bond has built her career around understanding and improving policies, assessments, and programs for students identified as English Learners and amplifying that knowledge for the benefit of all students and educators. Kannan brings her expertise in the area of score reporting that comes from several years of foundational and innovative research in standard setting and score reporting.

In the last few years, the recommended best practice (by the AERA, APA, NCME Standards for Educational and Psychological Testing, 2014) of upholding neutrality in assessment items has been questioned by researchers. There has been increasing evidence that item formats, item scenarios, scoring rubrics, and even the ways in which we define relevant academic content itself generally center perspectives and ways of knowing associated with whiteness (e.g., see Randall et al., 2022; Solano-Flores & Nelson-Barber, 2001).

In light of these findings and the large and persistent achievement gaps between White students and other groups, it is possible that White students have an inherent advantage while taking assessments due to encountering content and scenarios that are familiar to and comfortable for them. While it is unlikely that this difference alone is responsible for race-based achievement gaps, addressing it could still serve as a helpful strategy to address inequities in access and achievement, particularly if paired with efforts in other areas.

In response to this shift in how we think about assessment items, WestEd and an external research partner conducted a series of studies to understand whether the inclusion of culturally and linguistically responsive (CLR) text and/or images in assessment items results in increased student engagement with the items. Across the studies we have conducted over the past two years, we have worked together to hear directly from students about what they notice about assessment items, what they like and dislike, and whether they notice or care about cultural content in those items.

In focus groups we conducted with students (from a variety of racial backgrounds, in grades 3–8) last year, we found that kids did like giving feedback, but that only older students noticed CLR content, and all students were most focused on items they could solve quickly and efficiently and preferred test items to be “short and simple.”

Current Study

Our most recent project built on the previous findings in multiple ways. We decided to target middle school students (grades 6–8) and focus specifically on mathematics items in the current study. And since we found that students placed a high value on item length and complexity, we focused on developing items that were truly parallel in terms of length, complexity, and level of reasoning required to solve the problem and varied the items only on the cultural context.

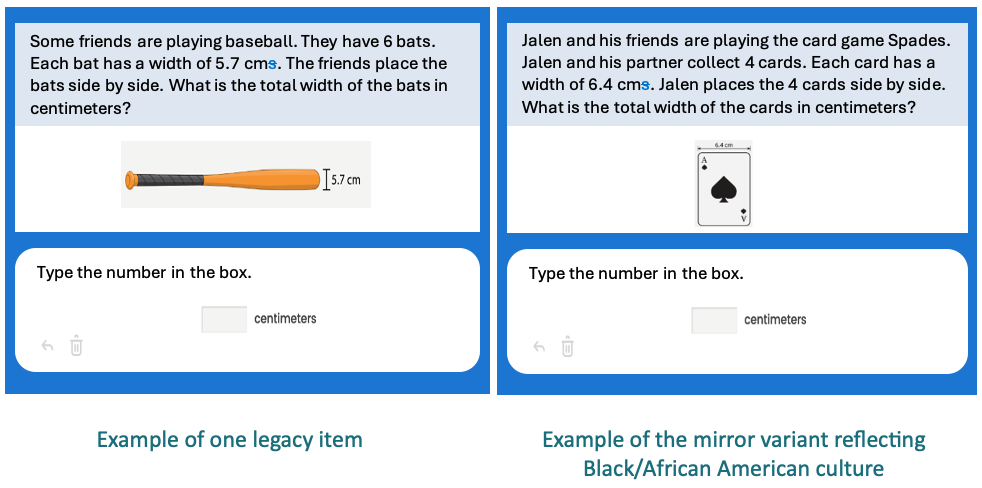

We decided to focus on two underrepresented groups in this study—students who identify as Black or African American and students who identify as Hispanic or Latinx—so that we could design items specifically representing aspects of these cultures and ensure students saw some items that might align with their stated cultural identities. In collaboration with our research partner, we identified 10 middle school mathematics items from their existing item set (we called these legacy items).

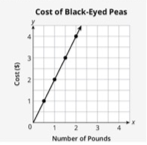

For each of these legacy items, we developed one Black and one Hispanic cultural variant, designed to be truly parallel to the original in length and complexity while reflecting Black and Hispanic cultures. We called these “mirror” items to signal that they are designed to reflect familiar content back to students, even if they do not explain that content to viewers who are not familiar with it. This resulted in a total of 30 items. We then distributed these items across forms (10 items each) that included different combinations of legacy and mirror items (see one example item below).

Among other research questions, we wanted to specifically evaluate (a) whether students self-report differently about their affect, comfort, and engagement/interest when the items more closely match or reflect their own racial or cultural identities and (b) whether students notice or react to cultural content when two items differ only on this front (and not on length, complexity, difficulty, item format, and other features).

FOCAL RESEARCH QUESTIONS:

- Do students self-report differently about their affect, comfort, and engagement/interest when the items more closely match or reflect their own racial or cultural identities than when they do not match their racial or cultural identities?

- Do students notice or react to cultural content when two items differ only on this front?

We asked students to tell us what they noticed and what they liked about items in two ways: first, we asked them to review a set of items (10 total legacy and mirror items) independently and give us feedback in writing, then we also discussed the items in a focus group setting with up to four other students of different or similar racial identities. We engaged in an iterative process to identify emerging themes, and the following major themes emerged across all focus groups.

Major Themes in Student Reactions During the Focus Groups

There was no evidence that students felt uncomfortable with item scenarios or in mixed groups. Overall, students appeared to be comfortable with the inclusion of cultural content and with sharing feedback during mixed-group sessions. Students responded positively as they reflected on the sessions with lots of positive reactions (e.g., smiley faces, hearts) and use of positive descriptors to describe their time in the session (e.g., “comfortable,” “heard,” “seen”), which provided evidence that the study did not elicit negative feelings or discomfort. When asked to indicate how each item made them feel during the premeeting item review, we found that all students generally reported feeling “capable” (33%), “interested” (28%), and “smart” (24%) while answering items. Students also reported being “confused” (24%) by certain items at equal rates. However, Black students reported feeling “anxious” (19%), “recognized” (10%), and “left out” (7%) in response to some items at somewhat higher rates than White students.

Students enjoyed items that resonated with them personally. Students showed an overall preference for items that resonated with them personally, regardless of the culture associated with the content. For example, students found the concert item appealing and inviting because they played a musical instrument, or they found the baseball item appealing because they played baseball. Though there was some evidence that the CLR content (e.g., playing the card game Spades) resonated with some students during the focus groups, we found more evidence of this during the premeeting work. Black/African American students chose more cultural variant items than White students, who chose legacy items and mirror items equally often.

Story/context are best valued when presented in pictures and graphs. We found that some students were drawn to the story aspects of the assessment item and were curious to see what happened next. However, they still indicated that they would not like too much text in math items and preferred items with pictures and graphs over additional text. For example, one student said, “Ideally [an item] would neither be super long or short, and it wouldn’t be complicated. I like it when there’s a visualization because it keeps me interested.”

Efficiency first, context next. Finally, one recurring theme we observed was that students focused on the math content and treated the scenarios surrounding the math problem as an afterthought. Students tended to view the test items through a lens of efficiency and clarity. They also tended to prefer easier items and showed evidence that they may find difficult content more stressful.

Where We Would Like to Go Next

Overall, although we were able to uncover some interesting themes to inform the development of assessment items that would be interesting and engaging to all students, there are some limitations to the current study that should inform future investigations. First, although efforts were made to recruit a diverse group of students, we struggled to recruit many students from diverse socioeconomic backgrounds, in particular students who identified as Hispanic/Latinx. These challenges may also have stemmed from the fact that these focus groups were scheduled virtually, in the evenings, and during the school year. To overcome these challenges, we want to prioritize in-person, face-to-face work when we conduct follow-up investigations.

Second, we found that students preferred items that resonated with them personally and made them feel seen. We would therefore prioritize and recommend engaging students in a co-design process. Working with students directly will also allow us to engage with them as partners in coming up with interesting scenarios. We learned on the current project that item writers don’t always know what resonates with younger students. But, armed with scenario ideas from real students, item writers could then identify ways to infuse cultural and linguistic diversity and representations into the scenarios as they develop items.

In closing, we feel that we have only scratched the surface in understanding how to develop culturally responsive assessment content. Our team at WestEd is excited about and committed to centering the voices of all students and supporting their equity and inclusion through our work.

References

American Educational Research Association (AERA), American Psychological Association (APA), & National Council on Measurement in Education (NCME). (2014). Standards for Educational and Psychological Testing. Washington, DC: AERA.

Randall, J., Slomp, D., Poe, M., & Oliveri, M. E. (2022). Disrupting White Supremacy in Assessment: Toward a Justice-Oriented, Antiracist Validity Framework. Educational Assessment, 27(2), 170–178. https://doi.org/10.1080/10627197.2022.2042682

Solano-Flores, G. & Nelson-Barber, S. (2001). On the cultural validity of science assessments. Journal of Research in Science Teaching, 38(5), 553–573. https://doi.org/10.1002/tea.1018