Designing and Integrating Interactive Science Simulations for Large-Scale Assessment


By Matt Silberglitt, Kevin King, and Sarah Quesen

Assessments aligned to multidimensional standards that integrate practices and content hold the promise of engaging students in doing science. But can assessments engage students in the depth and breadth of the practices? Computer simulations provide a key component of the answer.

A simulation engages students

- in science and engineering practices integrated with science content.

- in model-based reasoning and systems thinking; investigation and experimentation; designing solutions; solving complex problems; and reasoning from evidence.

- in scientific phenomena that enhance learning and assessment.

- in gathering and analyzing data at a scale that provides an opportunity to make sense of phenomena that can only be explained probabilistically.

Simulations can be key components of science instruction and assessment

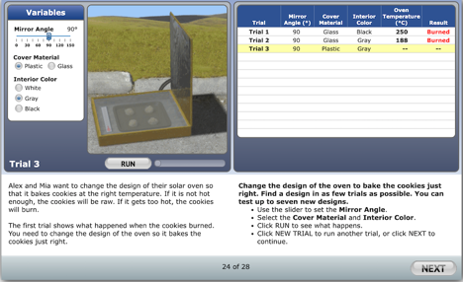

Simulations allow students to explore data; to observe results from scientific inquiry; and to reason from evidence to reach a deeper understanding of the often-unobserved, causal mechanism driving complex phenomena. An example of a simulation is shown in the figure below.

For this simulation-based activity, students manipulate both the angle of a mirror reflecting the sun and the characteristics of a solar oven in order to bake cookies without burning them. The results of each student's trials are recorded. Students may be scored on their choice of the best model (Trial 1, Trial 2, or Trial 3), on integrated items about what they learned in the process, or on both.

A cluster of integrated items built around a simulation is often called an “item cluster” or a “task”; this article uses the term “task.” A task may provide context for the simulation and include items that measure students’ science knowledge, skills, and abilities.

Another feature of simulation-based assessments is the accumulation of process data: the data generated by students’ actions while they investigate a phenomenon. Process data can be used to infer students’ understanding of how the system being studied functions. Examples of process data include actions taken while developing a model, changes made to the design of a system, how many times students review their trials, and where students hover and click when solving a complex problem.

Although enticing, inferences about students’ understanding drawn from process data must be made cautiously and combined with other sources of evidence. Inferences based solely on students’ actions in a simulation can be misleading, because exploring a simulation may lead students to try things that fail, and an unsuccessful attempt made while exploring does not necessarily signal a lack of understanding.

To address this challenge, simulations should be used both during classroom instruction and during large-scale assessment. Using them in both settings gives students two distinct ways to interact with a simulation: one focused on exploring the simulation and the system it represents, and another focused on demonstrating their ability to use the simulation effectively. The latter is best done within an assessment task that provides clear direction for the student and clear expectations for their performance.

Simulations and assessment tasks in large-scale assessment

Large-scale science assessment can benefit from the inclusion of simulations. However, developing simulations for large-scale assessment involves considerations distinct from those for simulations used during instruction. As stated in the Standards for Educational and Psychological Testing, “all steps in the testing process, including test design, validation, development, administration, and scoring procedures, should be designed in such a manner as to minimize construct-irrelevant variance and to promote valid score interpretations for the intended uses for all examinees in the intended population.”[2]

Effective use of simulations in assessment requires careful design that considers both how students interact with simulations and how simulations interact with the process of assessing students. An assessment task should provide a clear goal for a student’s use of the simulation, such as a model or set of conditions to investigate; a problem to solve; or a design goal to achieve. With a well-designed simulation, an assessment task can gather data from a student’s investigation and problem-solving processes, along with their answers to questions in the task.

Designers must also account for the time students need to engage immersively with the simulation environment, because that time adds to overall testing time. In large-scale assessment, students have only limited time to interact with a simulation, so those interactions need to be purposeful. In addition, a simulation typically needs to focus on one or two standards, so other items in the task must be used to extend coverage of the standards. This can reduce opportunities to cover the breadth of the standards in an assessment.

Because students have some degree of agency in interacting with the simulation interface, their experiences can vary in ways that complicate scoring, affecting both the items directly linked to the simulation and items elsewhere in the task. Careful design and research are required to confirm that psychometric model assumptions are met in task-based, high-stakes assessment, so that test scores are valid and reliable.

Equitable use of simulations

It is critical in all aspects of education, but particularly in high-stakes, large-scale assessment, that scores are fair and equitable for all students. Because of their complex, often visual, presentation and interactive design, simulations present challenges for incorporating accessibility features and accommodations. Simulations must be developed in ways that are consistent with Universal Design for Learning (UDL) and Universal Design for Assessment (UDA).

In large-scale assessment in particular, simulations need to be intuitive and self-explanatory. Additional supports need to be accessible and understandable without being distracting, and modes of presentation and response need to be amenable to accommodations. Developers also need to plan for print and braille formats that carry the same cognitive demand and call on the same application of practices as the original task, not additional demands, without constraining task development.

Accessibility and students’ ease or comfort with technology environments need to be considered from an equity perspective, that is, equity in access to and familiarity with technology. Equity concerns can be mitigated in assessment design by providing help tools embedded in a simulation or in the assessment environment. However, time spent getting help within a testing environment is time not spent exploring the simulation or responding to questions. Help tools alone are therefore insufficient; item developers should leverage the design and structure of the simulation environment itself to maximize access for all students.

Psychometric studies that closely examine how simulation-based tasks function for students from historically marginalized populations, students from lower-income households, students who are English learners, and students with disabilities are critical to ensuring that all students have the same opportunity to demonstrate their knowledge and skills on a novel assessment.

In addition, these new assessments provide an opportunity to ensure that the context and characters portrayed in the tasks are reflective of a wide range of students. A mixed-methods research approach—including focus groups and cognitive labs—allows assessment designers to be informed both by the data and by student voices.

Equity considerations are not unique to large-scale assessment, but it is in this context that they are most acute and most open to criticism, particularly given the challenges simulations pose for ensuring opportunity and access for all students.

However, leaving simulations out of large-scale assessment could reduce opportunities that simulations provide in the classroom, should the exclusion from testing be interpreted as diminishing their importance for instruction. Therefore, we advocate for addressing the challenges presented by simulations through a coherent approach to simulations that begins with instruction and is tailored for each level of an assessment system in ways that support the purposes of assessments. This coherent approach

- supports the goals of valid and reliable large-scale assessment. This way, students who experience simulations throughout instruction and assessment are able to use them to demonstrate what they know and can do on a large-scale assessment.

- supports the goal of accessibility. This way, accessibility features become familiar to students instead of presenting burdens or obstacles when they are encountered on a large-scale assessment.

- holds promise for a wider range of accommodations. This way, educators and instructional-materials developers who work with students who need accommodations are more likely to find ways to make simulations accessible. Assessment developers can then create assessment tasks that match instruction, including the features of those tasks that make them amenable to accommodations.

Students bring a range of experience with technology, including simulations, from throughout their education, along with a range of comfort levels with that technology, partly shaped by their access to it. Even as more schools reach a 1:1 ratio of devices to students, some students still lack consistent access to technology that others consider commonplace (e.g., smartphones, home computers).

Simulation developers have the experience and research base to develop simulations that are accessible to most students. However, fulfilling the promise of simulations demands an environment in which all students can engage in science.

Assessments can engage students in the depth and breadth of the practices of science and engineering, and simulations are a key component of fulfilling that vision. Thoughtful design and research are critical to achieving it, and the field is well on its way toward this goal.

Matt Silberglitt, Manager of Science Assessment with WestEd’s Assessment Design and Development team, manages activities for science assessment development projects. Kevin King is a Senior Project Leader in WestEd’s Assessment Design and Development team and a co-lead for the State Science Assessment Solutions team. Sarah Quesen is the Director of Assessment Research and Innovation at WestEd.

Footnotes

[1] SimScientists.org, supported by a grant awarded to WestEd from the National Science Foundation (DRL-1221614). Any opinions, findings, conclusions, or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the National Science Foundation.

[2] American Educational Research Association, American Psychological Association, & National Council on Measurement in Education (AERA/APA/NCME). (2014). Standards for educational and psychological testing (Rev. ed.). Washington, DC: American Educational Research Association, p. 63.